AI Infrastructure Guide

AI Infrastructure in 2026: Chips, Cloud, and Capacity Choices

A practical AI infrastructure guide focused on compute access, cloud choice, serving design, networking pressure, and the capacity constraints that shape real deployment.

First-wave infrastructure guides

Use the hub for the market picture, then move into the company, cloud-provider, and inference pages that answer the next infrastructure choice.

AI Infrastructure Companies to Know in 2026

A practical guide to the providers, cloud platforms, and capacity players shaping AI infrastructure choices in 2026.

Best AI Cloud Providers for Startups and Model Teams

Compare AI cloud providers by capacity access, pricing shape, startup fit, and the tradeoffs model teams face in production.

AI Inference Infrastructure: What Actually Drives Cost and Latency

A guide to the serving, routing, caching, and networking choices that decide AI inference cost and response time.

Weekly newsletter

Get the weekly AI infrastructure brief

One email each week on chips, cloud capacity, inference cost, networking shifts, and the provider moves that affect AI builders and buyers.

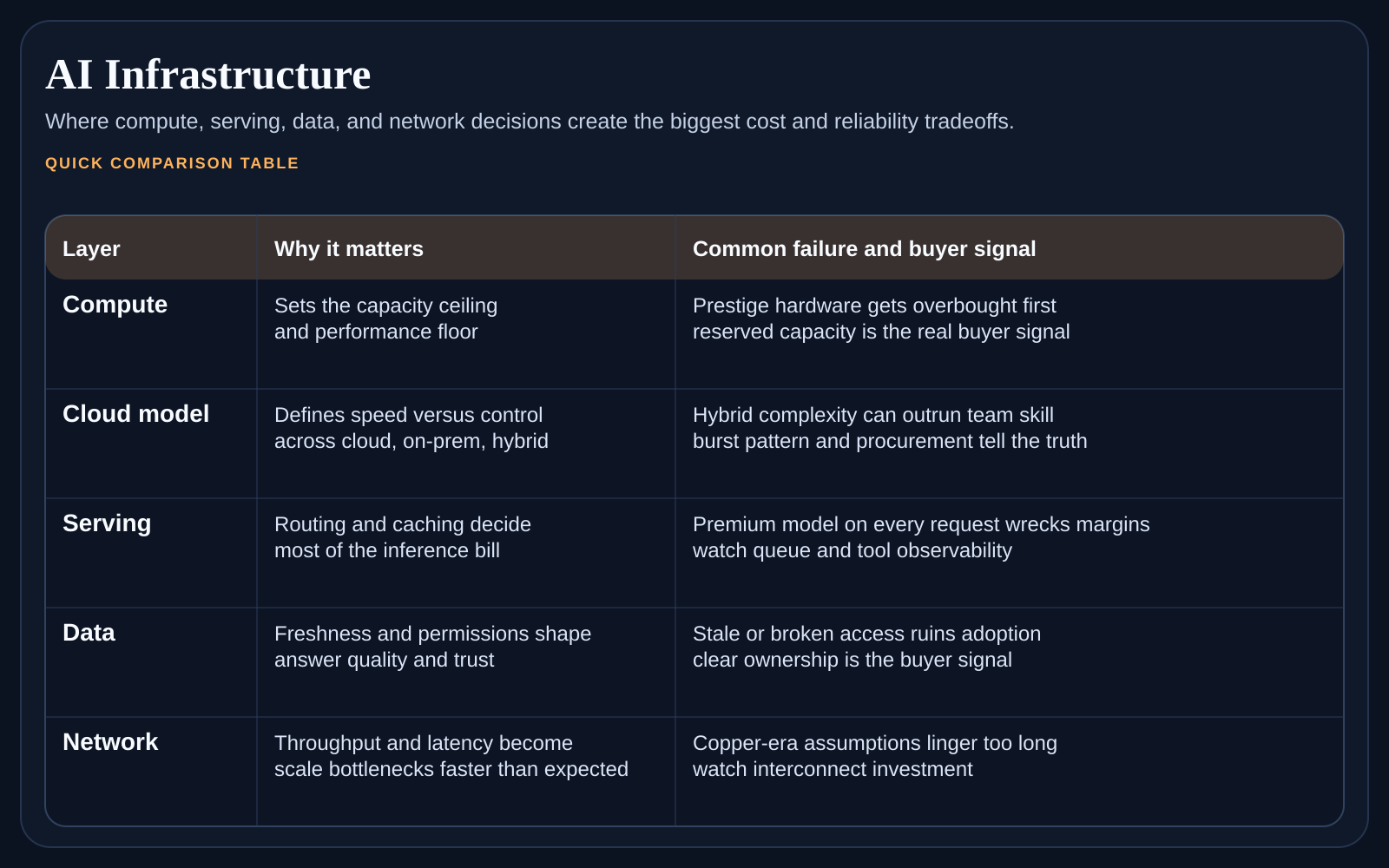

If you are searching AI infrastructure, the term covers more than chips. It is the full path from compute supply to model serving, networking, storage, and operational guardrails. This page is for buyers who need to understand where cost, latency, and availability really come from before they sign cloud contracts or redesign a production stack.

At a glance

The most useful shift in thinking is this. Infrastructure decisions should start from workload shape, not from vendor prestige. A team serving interactive inference has different needs from a team training models, running batch pipelines, or looking for startup-friendly cloud terms. The wrong comparison set can waste a month before procurement even begins.

The three AI infrastructure questions every buyer should answer

What kind of workload are we supporting: training, fine-tuning, batch inference, real-time inference, or a mix?

Is our main problem raw capacity access, cost stability, deployment speed, or control over the serving stack?

How much of the stack do we want to manage ourselves versus buying as a managed service?

Which parts of the system create the real bottleneck today: compute, routing, storage, networking, or governance?

Choose the next page by bottleneck

If the shortlist itself is unclear, start with the infrastructure-companies page.

If the main decision is where to run workloads, start with the cloud-provider page.

If the workload is already live and margins are under pressure, go straight to the inference-cost page.

If multiple bottlenecks exist, solve them in that same order so the evaluation stays focused.

Use the right child page for the real decision

Read AI Infrastructure Companies to Know in 2026 if you need a market map of the important players. Move to Best AI Cloud Providers for Startups and Model Teams when the next decision is where to run workloads. Finish with AI Inference Infrastructure: What Actually Drives Cost and Latency when serving economics and response time are the pressing issue.

Why infrastructure buying is getting harder

Because provider categories are blending together. GPU clouds are adding platform features. Hyperscalers are tightening their AI stories. Inference providers are moving up the stack. Buyers cannot assume that a company is only one thing anymore, which means the evaluation has to start from your operating need, not the vendor label.

FAQ

What is AI infrastructure?

AI infrastructure is the compute, cloud, storage, networking, serving, and operational layer that lets models run in production. It covers more than hardware because the same model can feel cheap or expensive, fast or slow, depending on how the rest of the stack is designed.

Should startups start with raw infrastructure or a managed layer?

Most startups should start with more managed help unless control itself is the product advantage. The simpler path usually wins early.

What is the biggest infrastructure evaluation mistake?

The biggest mistake is comparing vendor brands before defining the workload. Without that step, every sales process becomes noisy and hard to rank.

How to use this cluster

Treat this page as the top-layer map. Once you know whether your problem is provider selection, cloud fit, or inference design, jump to the matching child page and come back here when you need the broader context again. That keeps the research process narrow and useful instead of sprawling.