
AI Infrastructure Companies to Know in 2026

A current guide to the top AI infrastructure companies in 2026, from hyperscalers and GPU clouds to stack specialists, serving platforms, and the providers shaping capacity access.


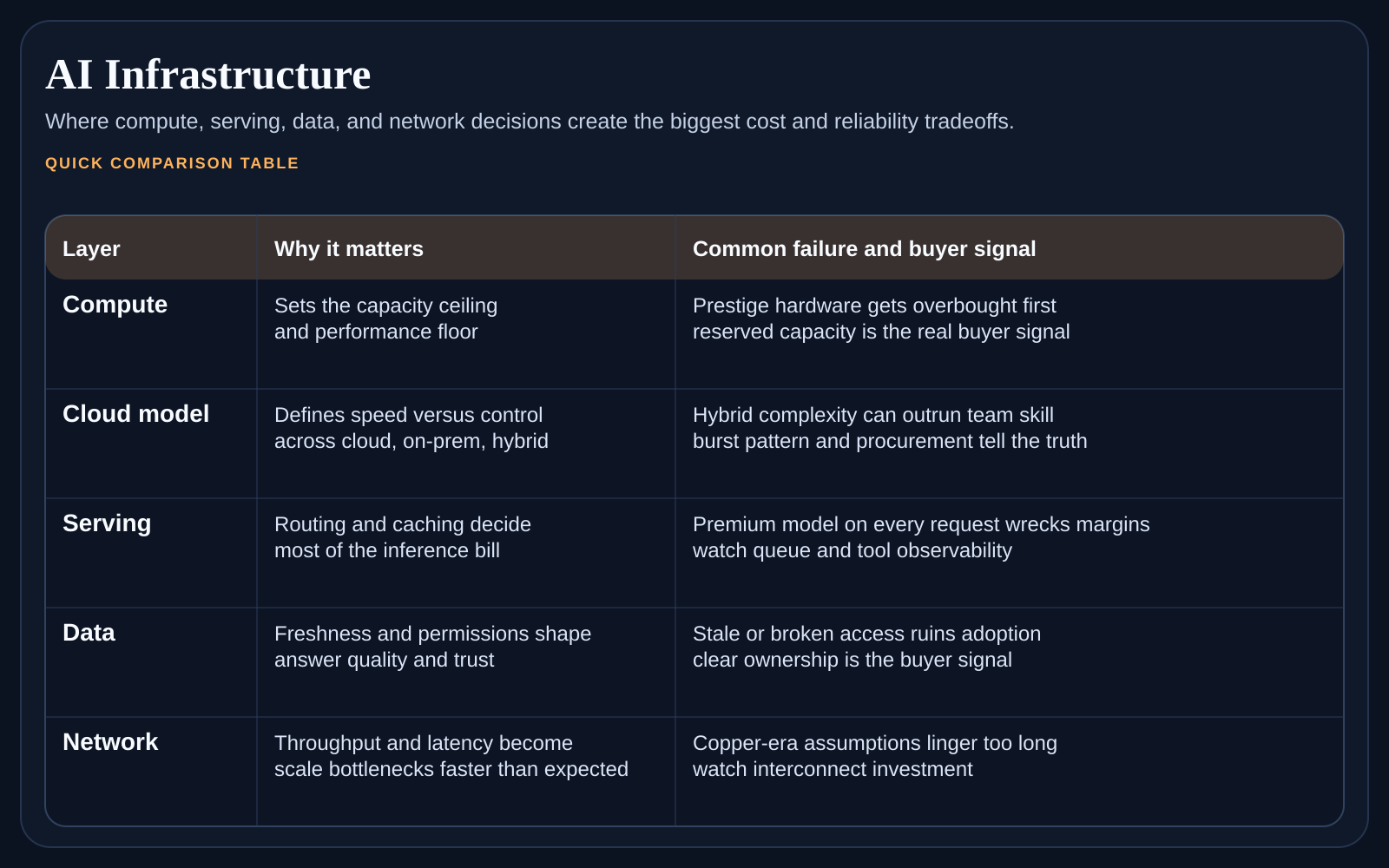

A lot of buyers search for the top AI infrastructure companies when what they really need is a category map. The most important providers in 2026 do not all look alike. Some sell raw or near-raw compute access. Some sell managed deployment layers. Some win because of relationships with model labs or startup ecosystems. The useful question is which category of AI infrastructure company solves your bottleneck.

At a glance

The cleanest way to read this market is by role. Hyperscalers matter because they tie AI to broad enterprise contracts. Specialist GPU clouds matter because they promise speed and focus. Managed platform players matter because they reduce the operational burden after capacity is secured. Networking and systems companies matter because they shape the performance ceiling under load.

Top AI infrastructure companies by category

Hyperscalers for broad enterprise buying power, ecosystem integration, and long contract depth.

Specialist AI clouds for faster access to accelerators and a more focused operating model.

Managed AI platform vendors for teams that want less infrastructure work after the initial contract.

Stack-layer specialists in networking, serving, or data movement for buyers solving very specific bottlenecks.

Which companies matter depends on your workload

A startup shipping its first inference product may care most about ease of deployment, startup credits, and a sane support path. A model lab or platform team may care far more about reservation access, multi-region capacity, and low-level control. That is why the same company can look essential to one buyer and irrelevant to another.

What to ask when comparing providers

Can the provider actually support our workload shape and growth path, or only the first stage of it?

How much of the stack do they manage once the hardware is provisioned?

Are we paying for convenience, for scarcity access, or for a real operational advantage?

If the market shifts fast, how hard will it be to move or add a second provider later?

How buyers usually build the first shortlist

Startups often shortlist one hyperscaler, one specialist AI cloud, and one managed platform option that handles more of the stack.

Model teams often shortlist providers based on capacity quality, control level, and support for unusual workload patterns.

Enterprise buyers often shortlist around procurement fit first, then narrow by technical match.

If a provider only looks good in one stage of your growth path, mark that clearly before signing long commitments.

What changed recently in the provider landscape

Provider positioning is shifting quickly. More hyperscalers are tightening model-serving bundles, specialist clouds are expanding managed features, and buyers are seeing more overlap between infrastructure categories. If your shortlist has not been reviewed in the past 30 days, assume at least one provider moved on pricing shape, packaging, or service boundary.

Recheck capacity commitments and reservation terms before signing, because packaging changes can alter the real effective price.

Verify managed-service boundaries: some providers now include more operations help, while others still expect in-house platform ownership.

Confirm migration friction up front, especially data-egress costs, deployment coupling, and control-plane dependencies.

Track where support quality has changed in practice: incident response quality matters more than feature-checklist parity.
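Reservation terms can change the effective price in a simple way. You pay for committed hours whether you use them or not, so the real cost per used GPU-hour depends on utilization. The sketch below illustrates that point under stated assumptions. The function name `effective_rate` is hypothetical. Billing overflow usage at a single flat on-demand rate is also an assumption, and the numbers are placeholders rather than any provider's actual pricing.

```python
# Minimal sketch: effective cost per *used* GPU-hour under a reservation.
# Hypothetical model: committed hours are billed in full at reserved_rate,
# and any usage beyond the commitment is billed at a flat on_demand_rate.
# Real contracts may price overflow, discounts, or tiers differently.

def effective_rate(reserved_rate: float, committed_hours: float,
                   used_hours: float, on_demand_rate: float = 0.0) -> float:
    """Return total spend divided by the GPU-hours actually used."""
    if used_hours <= 0:
        raise ValueError("used_hours must be positive")
    # Hours consumed beyond the reservation, if any.
    overflow = max(0.0, used_hours - committed_hours)
    # Committed hours are paid regardless of utilization.
    total_spend = reserved_rate * committed_hours + on_demand_rate * overflow
    return total_spend / used_hours


if __name__ == "__main__":
    # Placeholder numbers: 1,000 committed hours at 2.0 per hour, 600 used.
    print(round(effective_rate(2.0, 1000, 600), 2))
```

With a 1,000-hour commitment at 2.0 per hour and only 600 hours used, the effective rate rises to about 3.33 per used hour. This is why utilization assumptions belong in any comparison of reservation terms.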

FAQ

Do hyperscalers always beat specialist clouds?

No. Hyperscalers win some buying contexts because of contract depth and ecosystem breadth, but specialist clouds can win on focus, support, and faster access.

Should a startup care about multi-provider flexibility right away?

It should care enough to avoid a dead end. Full multi-cloud design can wait, but a painful migration trap is still worth avoiding early.

Where to go next in the cluster

Once you know which companies deserve a shortlist, compare hosting environments on Best AI Cloud Providers for Startups and Model Teams. If your real pain sits in serving economics after the workload is live, continue to AI Inference Infrastructure: What Actually Drives Cost and Latency. For the full market frame, return to AI Infrastructure in 2026.
