Enterprise AI Resource Cluster

Enterprise AI in 2026: Use Cases, Governance, and Rollout

A current enterprise AI guide for leaders comparing use cases, governance controls, and rollout sequencing before adoption scales.

First-wave pages

Use the hub for the operating model, then move into the pages on workflow use cases, governance controls, and rollout sequence.

Enterprise AI Use Cases for Finance and Operations

A practical guide to where enterprise AI is landing first in finance, planning, supply chain, and operations work.

Enterprise AI Governance Checklist for 2026

A checklist for audit trails, approvals, identity controls, and policy decisions that shape enterprise AI rollouts.

AI Rollout Checklist for Mid-Sized Companies

A rollout checklist for mid-sized companies deciding where to start, how to govern access, and how to phase adoption without adding noise.

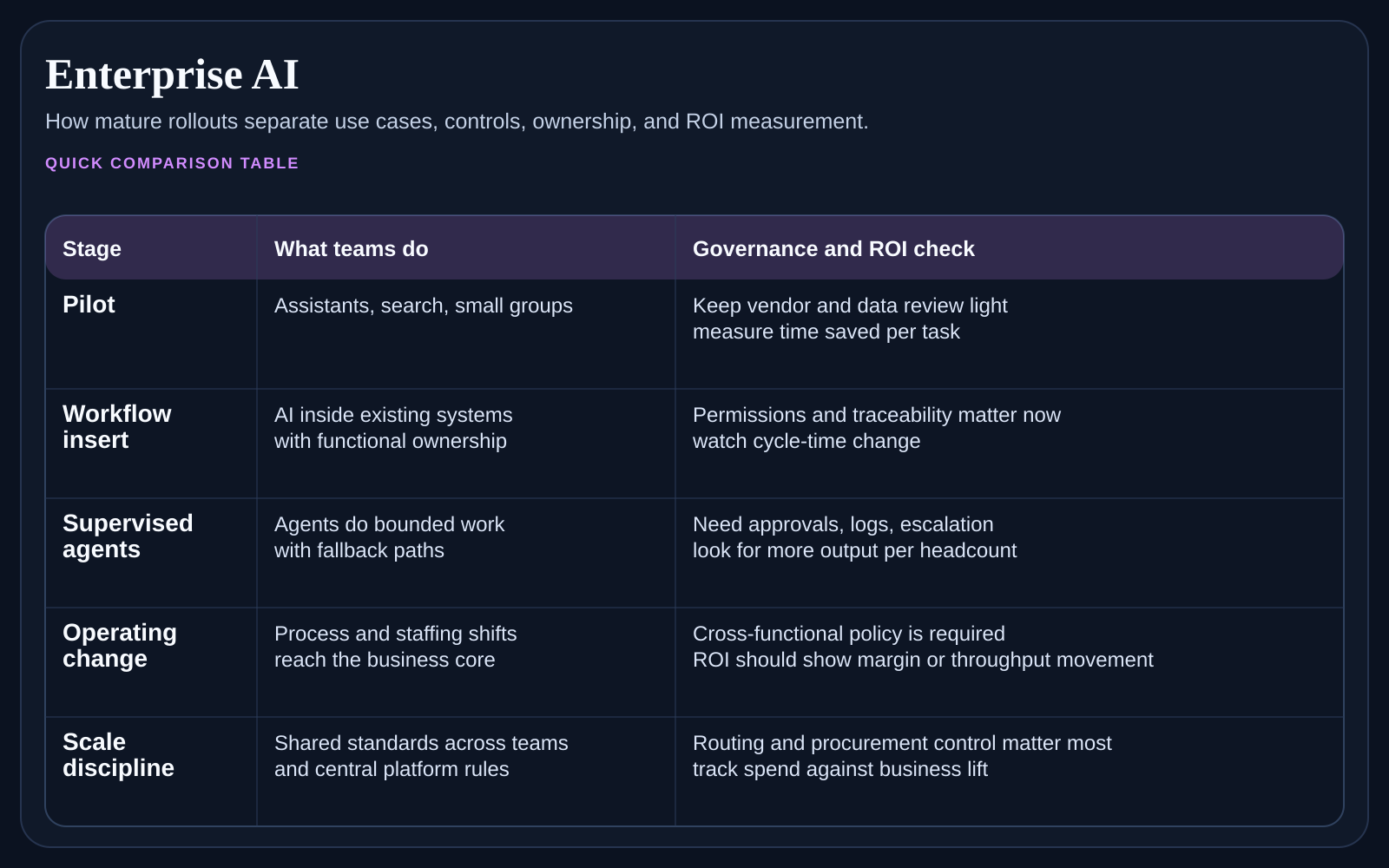

If you are searching for enterprise AI, the hard part is not defining the phrase. It is deciding where the program should start, which controls have to exist first, and how to prove business value without forcing every department into a rushed rollout. Enterprise AI is now an operating-model decision as much as a tooling decision.

At a glance

If you are looking for a clear starting point, this is it. In 2026, the strongest enterprise AI programs start in repeatable workflows, not in vague companywide mandates. They pair narrow wins in finance, operations, or support with approval rules, identity controls, and a realistic rollout order.

What enterprise AI leaders should settle first

Which workflows can show time savings, error reduction, or throughput gains within one quarter.

Which data sources and business systems the AI layer can reach without unsafe copy-and-paste habits.

Which approval, audit, and model-access rules must exist before usage grows.

Which executive owner will keep the program tied to operating outcomes rather than novelty metrics.

Best entry point by company situation

If leadership keeps asking where AI should start, begin with the use-case page and narrow the first workflow.

If legal, security, or audit is slowing decisions, go straight to the governance checklist before expanding the pilot.

If a mid-sized company already has one promising workflow but no rollout discipline, use the rollout checklist first.

If all three problems exist at once, solve them in this order: use case, governance, rollout.

The three pages that matter most in this cluster

Use Enterprise AI Use Cases for Finance and Operations to narrow the first workflow targets. Move next to Enterprise AI Governance Checklist for 2026 to understand the controls that should exist before adoption widens. Finish with AI Rollout Checklist for Mid-Sized Companies if your company needs a practical sequence instead of a broad strategy deck.

Why many enterprise AI efforts stall

Many efforts stall because companies try to solve every use case and every policy question at once. A better pattern is to start with one or two workflow families, define what success looks like, and then expand only after identity, logging, and ownership are stable. Enterprise AI works when the company is honest about what it can govern today.

FAQ

What does enterprise AI usually mean in practice?

In practice, enterprise AI usually means applying models to repeatable business workflows with clear ownership, auditability, and measurable outcomes. It is less about a single assistant and more about how the company chooses, governs, and scales useful automation.

Should enterprise AI start with a companywide assistant?

Usually no. A broad assistant can create interest, but the clearest business wins usually come from narrower workflows with measurable before-and-after results.

What is the first metric leaders should watch?

Start with a workflow metric that operators already respect, such as turnaround time, exception volume, backlog age, or hours spent per completed task.
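As a rough illustration of a before-and-after comparison on one of these metrics, the sketch below computes the relative change in turnaround time for a single workflow. The function name `relative_change` and the hour figures are hypothetical and chosen only to show the arithmetic; they are not benchmarks from any real program.

```python
def relative_change(before: float, after: float) -> float:
    """Return the fractional change from `before` to `after`.

    A negative result means the metric went down (for example, faster turnaround).
    """
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


# Hypothetical pilot numbers, for illustration only.
baseline_turnaround_hours = 40.0
pilot_turnaround_hours = 30.0

change = relative_change(baseline_turnaround_hours, pilot_turnaround_hours)
print(f"Turnaround time change: {change:+.0%}")  # prints "Turnaround time change: -25%"
```

The same calculation applies to exception volume, backlog age, or hours per completed task, as long as the baseline is measured before the pilot starts and both figures cover comparable periods.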

How to use this cluster

Read the sibling page that matches the decision already in front of you. If the team is arguing about where to start, use the use-case page. If security or legal is slowing the program down, go to the governance checklist. If management wants a rollout sequence for a mid-sized business, use the rollout checklist directly and come back here for context.